Lawsuits accusing Meta of harming teenagers on its platforms have intensified scrutiny of the company’s role in children’s online safety. They allege that Meta, the parent company of Facebook and Instagram, knowingly allowed its apps to damage teen mental health, fueling strong demands for greater accountability.

Multiple legal actions have been filed against Meta, accusing it of prioritizing engagement and profit over the well-being of its youngest users. Internal documents that have surfaced in connection with these cases indicate that Meta’s own research found Instagram worsened body image issues and anxiety for some teen girls. Despite this knowledge, critics argue, the company failed to implement necessary safeguards or transparent parental controls.

Court rulings on related social media harms add context to these findings. US Supreme Court rulings in social media cases, for example, reflect growing judicial attention to the question of platform responsibility. These cases highlight an evolving legal landscape in which platforms face mounting pressure to answer for user safety, particularly the safety of minors.

Analysis of Meta’s internal documents shows a troubling gap between what the company understood about risks to teenagers and the safeguards it actually deployed. Experts note that these documents offer a rare look into tech industry practices that are normally shrouded in corporate confidentiality. One prominent child psychologist commented, “The disclosure of Meta’s internal findings is a crucial step toward understanding how social media impacts adolescent development and where interventions must be made.”

Teen users and some parents have shared their experiences to show the real-world consequences behind the lawsuits. Their accounts include worsening depression linked to time spent on Instagram and pressure to meet unrealistic beauty standards reinforced by recommendation algorithms. These testimonials amplify calls for stricter oversight and for digital literacy education.

Governments have responded with legislative initiatives to counter the risks posed by social media giants. In the United States, for example, the proposed Kids Online Safety Act would establish more robust protections for children, including transparency reporting requirements and safety features tailored to young users. Such legislation represents a crucial point of leverage for ensuring that companies like Meta address systemic harms.

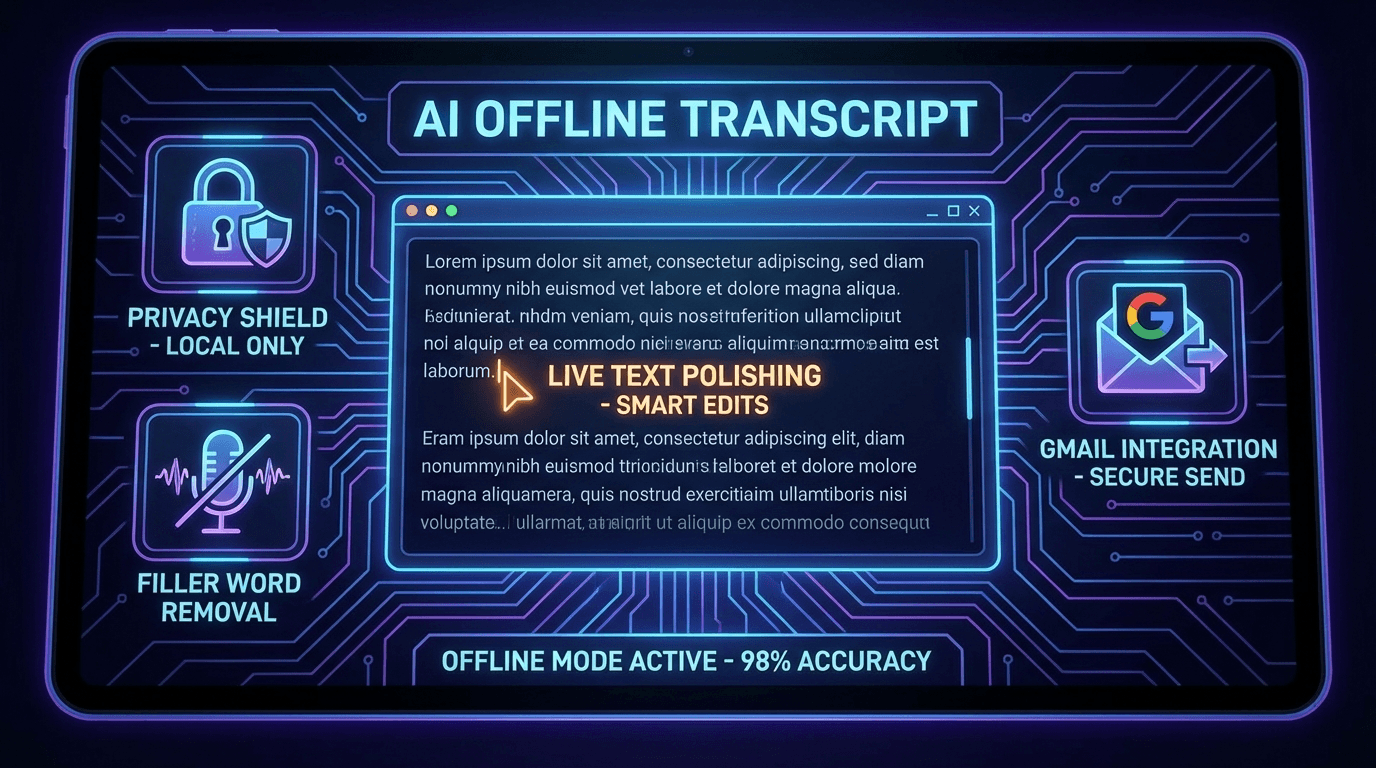

Alongside legal and legislative approaches, there is a growing focus on technological solutions and parental controls. Enhanced monitoring tools, time management features, and content filters are gradually becoming standard in apps targeting children and teens. However, critics argue these measures are not enough if they remain optional or are difficult for parents to use effectively.

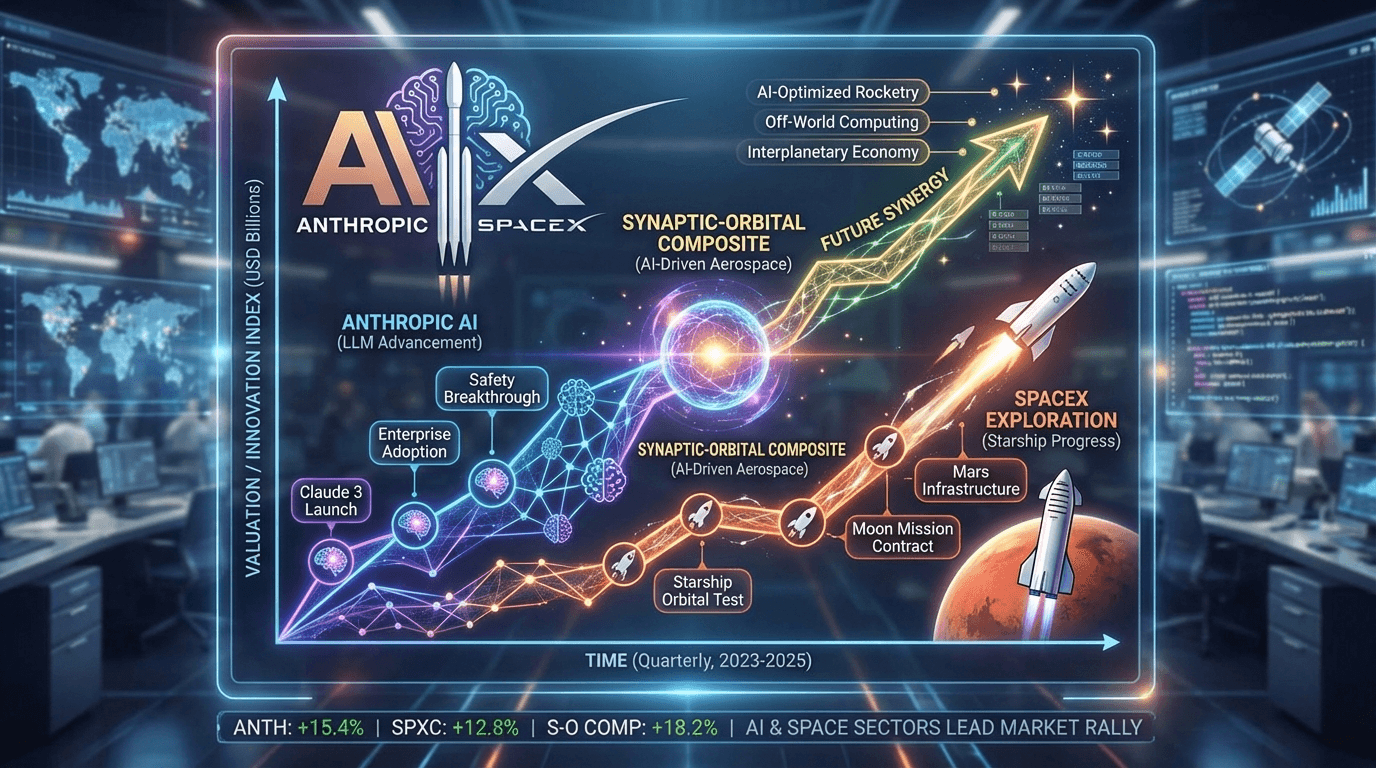

As these platforms rely increasingly on AI-driven algorithms, understanding their impact on young users becomes even more critical. Broader data on AI adoption offers useful insight into how automation and algorithms shape digital experiences, including both the risks they create and the opportunities they open for safety interventions.

The lawsuits over Meta’s alleged harm to teenagers bring together legal battles, documentary evidence, personal stories, and policy responses. This multifaceted struggle underscores a fundamental challenge: balancing technological innovation with the ethical responsibility to protect vulnerable users. It also signals a turning point at which long-standing concerns about children’s welfare in digital spaces are prompting concerted action from courts, lawmakers, and civil society.

As this legal saga continues, greater transparency and enforcement will be vital to prevent similar harms across platforms worldwide. The demand for accountability transcends national borders, highlighting the global nature of social media’s influence on youth. Ultimately, these cases could reshape how tech companies design and govern their products, potentially ushering in a new era of children’s online safety standards.

The outcomes of these lawsuits and legislative efforts will be closely watched by parents, policymakers, and legal professionals following social media litigation, teen safety, and the development of protections for younger users.